The journey of language models in artificial intelligence has been a remarkable one, with the Generative Pre-trained Transformer (GPT) architecture standing as a pivotal milestone.

The evolution of GPT, from the original model in 2018 to the 175-billion-parameter GPT-3 in 2020, has transformed the landscape of natural language processing and opened new frontiers in AI applications.

GPT-1: Laying the Foundations

The inception of GPT marked a significant departure from traditional, task-specific approaches to language processing. GPT-1 popularized a two-stage recipe: generative pre-training on unlabeled text, followed by supervised fine-tuning on each downstream task. It used a transformer decoder with roughly 117 million parameters to model the contextual relationships between words. Pre-trained on a large corpus of unpublished books (BooksCorpus), GPT-1 showcased promising language generation capabilities.

However, it had limitations, particularly in coherence and understanding context beyond local sentence-level dependencies.
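
The two training stages can be written down compactly. The formulas below follow the notation of the original GPT paper rather than anything stated in this article, so treat the symbols as assumptions: $\mathcal{U} = \{u_1, \dots, u_n\}$ is the unlabeled token corpus, $k$ the context window, $\Theta$ the model parameters, $\mathcal{C}$ a labeled dataset of token sequences $x^1, \dots, x^m$ with label $y$, and $\lambda$ a weighting term.

$$
\begin{aligned}
L_1(\mathcal{U}) &= \sum_{i} \log P\left(u_i \mid u_{i-k}, \dots, u_{i-1}; \Theta\right) && \text{(pre-training: predict the next token)} \\
L_2(\mathcal{C}) &= \sum_{(x, y)} \log P\left(y \mid x^1, \dots, x^m\right) && \text{(fine-tuning: predict the task label)} \\
L_3(\mathcal{C}) &= L_2(\mathcal{C}) + \lambda \, L_1(\mathcal{C}) && \text{(fine-tuning with language modeling as an auxiliary objective)}
\end{aligned}
$$

The pre-training objective $L_1$ only conditions on the previous $k$ tokens, which is one way to see why context beyond that window is out of the model's reach.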

GPT-2: Scaling Up and Generating Hype

GPT-2 emerged as a substantial leap forward in the evolution of the architecture. With 1.5 billion parameters in its largest version, more than ten times the size of GPT-1, GPT-2 demonstrated an enhanced ability to capture long-range dependencies and generate coherent, contextually relevant text. Trained on a much larger corpus of web text, it could handle many tasks without task-specific fine-tuning, generating excitement and concerns simultaneously.

Due to fears of potential misuse, OpenAI initially released only smaller versions of GPT-2 in 2019 and published the full model in stages later that year, highlighting the ethical considerations associated with powerful language models.

GPT-3: Unleashing Unprecedented Power

GPT-3 pushed the boundaries of language generation well beyond its predecessors. With 175 billion parameters, more than 100 times as many as GPT-2, it was the largest language model publicly described when it was introduced in 2020.

Its exceptional ability to understand and generate human-like text surpasses its predecessors in coherence, context comprehension, and creative responses. GPT-3’s versatility spans a wide range of tasks, from language translation and question answering to creative writing and code generation.
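
To put the parameter counts in perspective, here is a minimal sketch that estimates the size of a decoder-only transformer from its depth and width. It relies on the common rule of thumb that each transformer block holds about 12 × d_model² weights (attention plus feed-forward), and adds the token and position embeddings. The layer counts, widths, vocabulary sizes, and context lengths below are taken from the published model descriptions, not from this article, and the function name `approx_decoder_params` is hypothetical. Biases and layer-norm weights are ignored, so the results are approximations only.

```python
# Rough parameter estimate for a decoder-only transformer.
# Assumption: each block has ~4*d^2 attention weights and ~8*d^2
# feed-forward weights (hidden size 4*d), i.e. ~12*d^2 per block.
# Biases and layer-norm parameters are ignored.

def approx_decoder_params(n_layers, d_model, vocab_size=0, n_positions=0):
    """Return an approximate parameter count (blocks + embeddings)."""
    block_params = 12 * n_layers * d_model ** 2
    embedding_params = (vocab_size + n_positions) * d_model
    return block_params + embedding_params


# Configurations as reported in the respective papers (assumed here,
# not stated in this article). GPT-2 refers to its largest version.
CONFIGS = {
    "GPT-1": dict(n_layers=12, d_model=768, vocab_size=40478, n_positions=512),
    "GPT-2": dict(n_layers=48, d_model=1600, vocab_size=50257, n_positions=1024),
    "GPT-3": dict(n_layers=96, d_model=12288, vocab_size=50257, n_positions=2048),
}


def estimate(name):
    """Look up a named configuration and estimate its size."""
    return approx_decoder_params(**CONFIGS[name])


if __name__ == "__main__":
    for name in CONFIGS:
        print(f"{name}: ~{estimate(name) / 1e9:.2f} billion parameters")
```

Under these assumptions the estimates land close to the commonly quoted figures of about 117 million, 1.5 billion, and 175 billion parameters, which shows that most of the growth comes from the quadratic dependence on model width.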

GPT-3 Applications and Impact

The impact of GPT-3 is felt across various industries and domains. In natural language processing, it has been used to build more capable chatbots, virtual assistants, and language translation services. GPT-3’s influence extends to content generation, where it helps professionals draft engaging and persuasive text more quickly.

In medical research, GPT-3 has been explored as a tool for summarizing and analyzing text-heavy data, although outputs in areas such as diagnosis and treatment planning still require expert review. Moreover, GPT-3 has ventured into creative realms, assisting with storytelling, song lyrics, and other creative writing, showcasing its potential to augment human creativity and imagination.

Future Directions and Ethical Considerations

The evolution of GPT architecture suggests a trajectory towards even larger model sizes and enhanced capabilities. Future iterations are likely to further improve language understanding, coherence, and creative capabilities.

However, ethical considerations surrounding responsible use, potential misinformation, and biased outputs remain paramount. Researchers and developers must continue to explore ways to mitigate these risks, ensuring that the power of GPT models is harnessed for the benefit of society while upholding principles of transparency, fairness, and accountability.

In conclusion, the evolution of the GPT architecture represents a transformative journey in the realm of language generation, unlocking new possibilities and reshaping the landscape of artificial intelligence. As the technology continues to advance, a careful balance between innovation and ethical considerations will be essential to harness the full potential of GPT for the betterment of humanity.

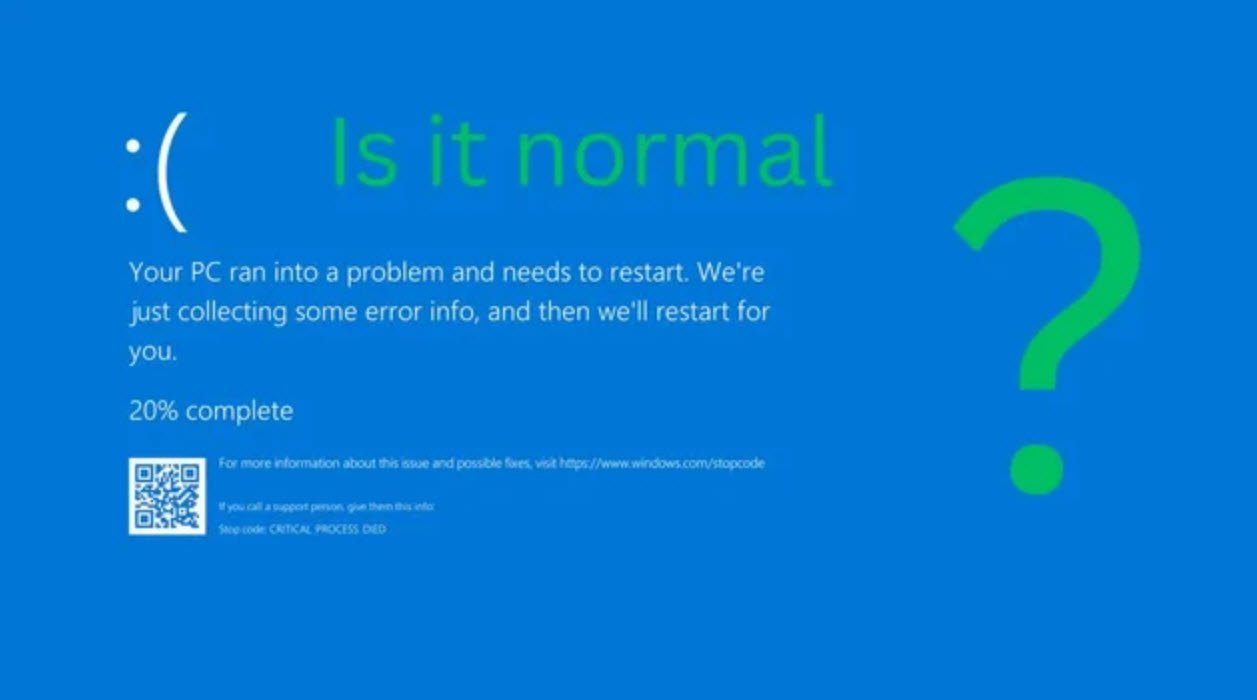

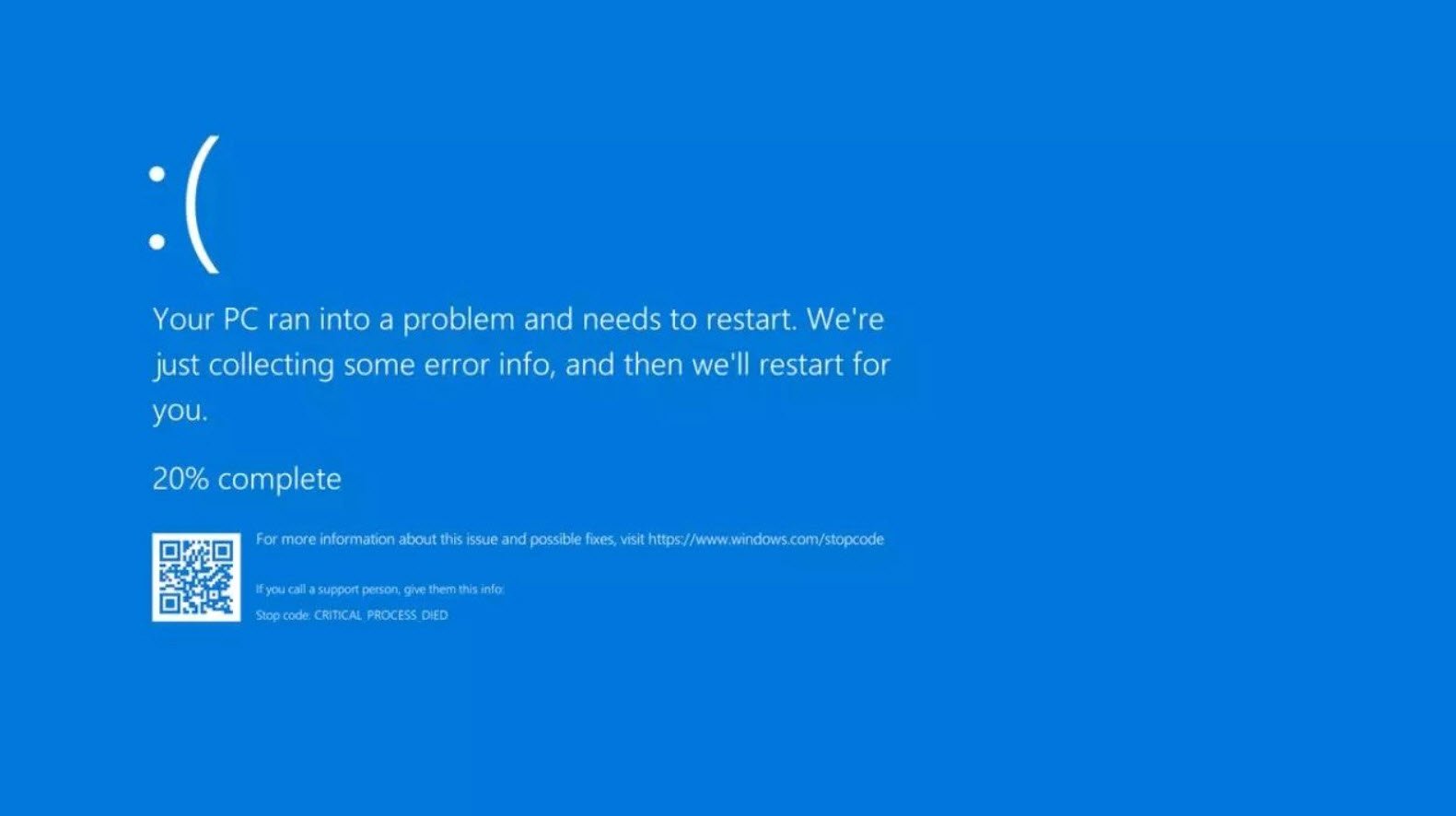
